
With the economic downturn since COVID-19, private brands have experienced a remarkable surge in popularity within the US marketplace. Once seen as generic alternatives, private brands have transformed into trusted and desirable options for consumers seeking quality, value, and innovation. Let’s explore the impressive growth of private brands over the past year, delve into consumer shopping preferences, identify the most popular private brand product categories, and highlight the pivotal role market research plays in empowering private brands to thrive.

The Growth of Private Brands

The growth of private brands in the US market has been nothing short of extraordinary. Over the past year, private brands have witnessed significant expansion, capturing a larger market share and earning a loyal customer base. According to recent industry reports, private brand sales have grown by 11% in the last year alone, outpacing the growth of national brands. This surge reflects a shift in consumer perception, as private brands are no longer viewed as inferior alternatives but rather as competitive, high-quality options.

Consumer Shopping Preferences for Private Brands

One of the driving forces behind the rise of private brands is the evolving shopping preferences of consumers. Today's consumers are more value-conscious, seeking affordable yet high-quality products. Private brands excel in meeting these demands by offering competitive pricing without compromising on quality. A growing number of consumers perceive private brands as trustworthy and reliable alternatives to national brands, often choosing them for everyday essentials and even premium products.

Consumers appreciate the innovation and uniqueness that private brands bring to the table. They are drawn to private brands for their ability to introduce new and trendy products that cater to specific consumer needs and preferences. This adaptability and agility in product development and differentiation contribute to the growing appeal of private brands.

Generational and Demographic Preferences

Understanding consumer behavior across different generations is crucial for private brands to tailor their strategies effectively. Millennials appreciate the affordability, quality and innovation of private brands while Gen Z is attracted to the value proposition, authenticity, and customization possibilities they offer. Baby Boomers like the cost savings, familiarity and quality assurance.

Alongside generational trends, demographic factors such as income levels, household size, and education also influence private brand purchases. Studies have shown that consumers with lower incomes are more likely to buy private brands due to their cost-effectiveness. Larger households with children tend to have higher private brand adoption rates, as these brands offer value and cost savings when buying in bulk. Educational attainment can also play a role, as more educated consumers may be inclined to compare options and make informed decisions, leading to a higher likelihood of purchasing private brands.

Popular Private Brand Product Growth Categories

Several product categories have experienced notable growth in private brand offerings such as:

Market Research Empowers Private Brands

Market research plays a vital role in the success of private brands. By employing robust research methodologies, private brand companies gain invaluable insights into consumer preferences, market trends, and competitive landscapes. Here are a few ways research can help private brands:

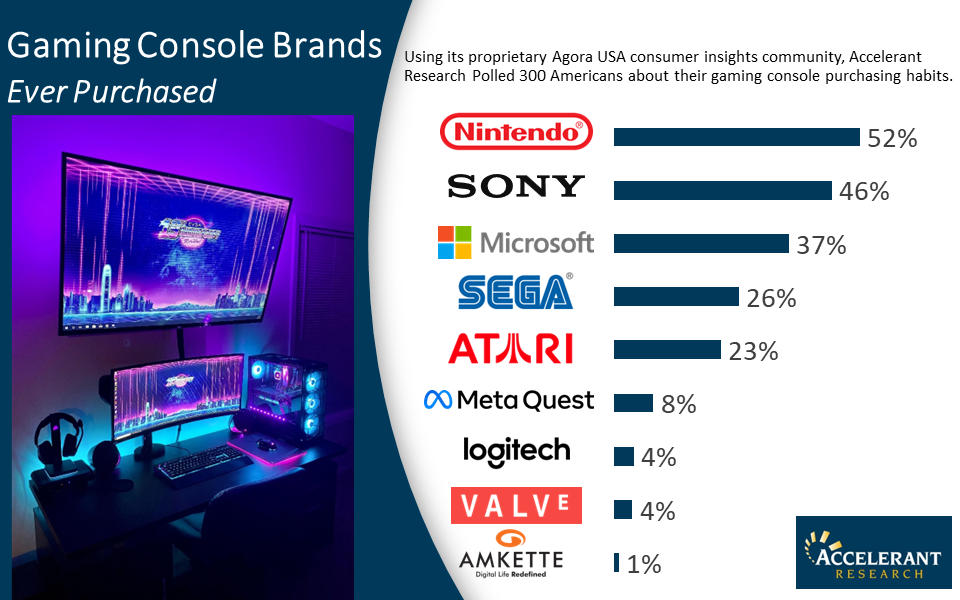

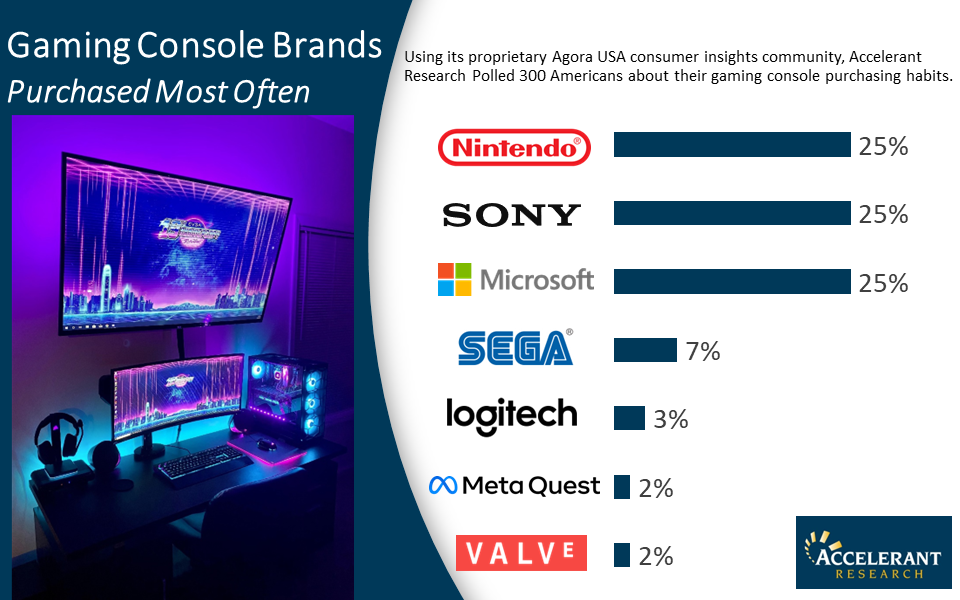

The rise of private brands in the US marketplace is a testament to their increasing popularity and consumer trust. By understanding consumer shopping preferences, focusing on growth categories, and leveraging market research insights, private brands can continue to thrive and capture a larger share of the market. Research serves as a powerful tool, providing the necessary intelligence to develop targeted strategies, innovate product offerings, and build enduring relationships with consumers.

Using our proprietary online insights community, Agora USA, Accelerant Research surveyed 300 American consumers about their gaming console brand awareness, usage, and perceptions.

BRAND FUNNEL FLIPBOOK:

NET PROMOTER SCORES:

Marketers and Market Research Agencies have a lingo all their own, but some terms that are intuitive to us leave consumers scratching their heads during discussions. Brand Personality can be one such phrase. Consumers sometimes view their relationships to brands in rational terms, and when asked to describe the personality or persona of a brand, initial responses may be heavy on product traits, experience, and price. While that information is valuable, it doesn’t illustrate the consumer’s emotional view of the brand landscape, and sometimes, that’s where the real insights gold is buried. Time to go prospecting.

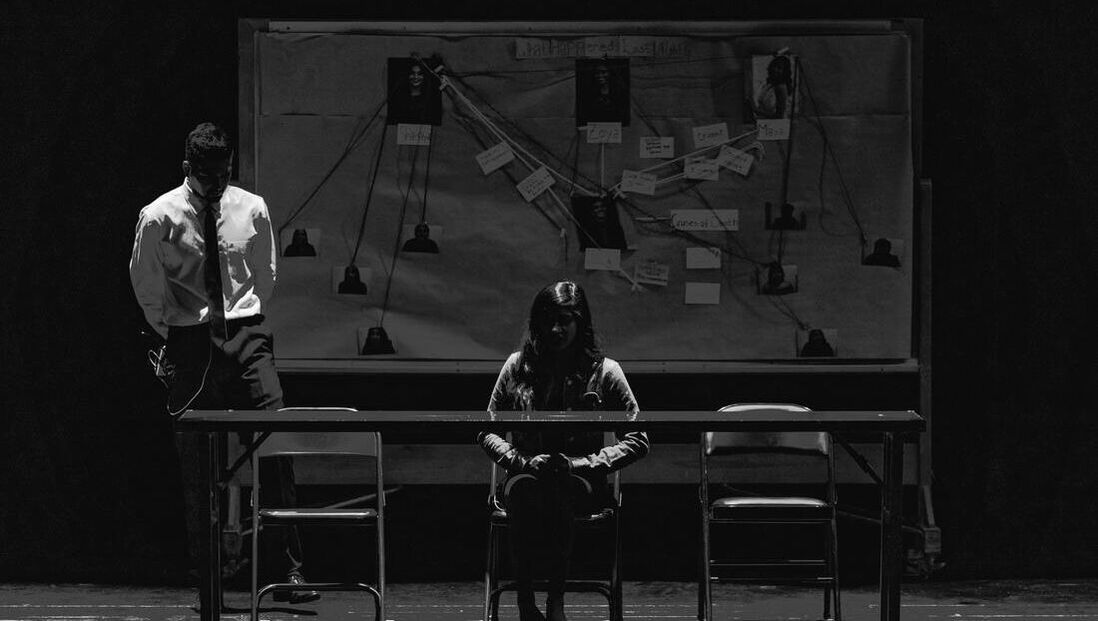

Discussions of brand persona are a nice opportunity to dust off what may be the most classic of projective techniques in the marketing research arsenal: describing brands as people. Asking participants to imagine the brands they use as fictional characters, what they look like, how they dress, and what types of personalities they have, frames brand persona in terms consumers can understand without the need for a detailed explanation that bites into valuable discussion time. In addition to being both fun and insightful, it also naturally lends itself to a host of probes if descriptions for different brands seem highly similar or widely different, or if you want to dig a bit deeper into what certain characteristics mean to a consumer.

Since the exercise is so common, it’s sometimes tempting to cast around for a unique setup or one that fits neatly with the research topic at hand. There are dozens in use: brands as people in elevators, attending parties, dramatis personae of a play, or marooned on desert islands. Generally speaking, it is best to lean away from setups that have any built-in cultural or demographic context. Though it seems counter-intuitive, a very generic setup can avoid inadvertently tapping into any preconceived notions your participants have about what type of people frequent certain environments. The goal of the projection is to offer a completely blank slate for participants to fill in as they will.

If you’re doing research with coffee, for example, it might seem natural to ask your participants to imagine brands as folks in a coffee shop; but you run the risk of precluding unrestrained creative thinking if a given participant imagines coffee shop goers to be younger and tech-savvy as a rule. Framing the task as describing brands as supermarket shoppers seems like a good fit for the grocery category, but for some of your participants, glamorous jet setters might then be off the table even if that perfectly describes how they would think of a certain line of upscale crackers. Likewise, castaways on a desert island may have some respondents trying to shoehorn their brands into the closest equivalent Gilligan’s Island character, and you lose quite a bit of nuanced imagery as participants try to force-fit roles artificially. A brand will end up as Gilligan, fit or no.

On the analysis end of the spectrum, the brand as person exercise provides rich descriptive imagery, both illuminating and worthy of summarizing. Here’s another place to use some caution, though. Consumers are complicated, and when interpreting their responses to the exercise, trained insights professionals and marketers know it’s best to avoid assigning personal judgments about whether certain persona characteristics are positive or negative. Take, for example, a brand described as young. Is that a good thing or a bad thing? One consumer might tie youth to high energy, which they see as a positive; another may equate youth with inexperience, which they view as a negative. Any value judgments on dramatis personae should come from the consumers themselves, in the way they frame and describe the context of their responses as they respond to follow-ups.

The brands as people projective exercise has long been a moderator staple for good reason. It’s well worth the relatively short time investment and moves discussion along to deeper emotional insights more quickly than direct questioning. And it’s hard to argue with the outputs. There is strong value in understanding the below-the-surface emotional and aspirational lenses through which consumers view the brands with which they’ve built relationships. The context of the projective exercise task and the interpretation of the descriptive responses, however, can be critical to successful use.

If you’re looking for some skilled moderators for your next qualitative project, we invite you to give us a call (704-206-8500) or send us an email (info@accelerantresearch.com). With our support and guidance in participant recruiting, technology/logistics management, and moderating/full-service support, Accelerant Research can provide you with similarly successful and impactful insights.

Gen Z is the group following on the heels of Millennials, and as its members enter their 20s, they are increasingly on everyone’s radar; but who are they, and what makes them unique? Gen Z includes individuals born between 1997 and 2012. The average Gen Zer got their first smartphone just before their twelfth birthday. They’re the most diverse generation in American history in terms of ethnicity and race, predicted to become majority nonwhite by 2026. They make up 40% of the global consumer population, so it’s no wonder Gen Z’s spending power has reached $360 billion. They are expected to account for over 41 million U.S. digital buyers by the end of 2022. Let’s look at Gen Z’s characteristics and shopping habits to understand more about them.

They grew up on the internet. This tech-savvy generation has been connected to the internet from an early age.

They research and discover new products on social media. Gen Z turns to social media for research and finding new products. They are more likely to discover new products in feeds, ads and influencer posts on social media, and are more likely to be influenced to purchase after seeing a product promoted by a brand on social media.

They care about social responsibility. Gen Z is an extremely diverse crowd with strong opinions on various social issues, which makes them want to purchase from brands that get involved in improving society. They look for actions like encouraging recycling, protecting the environment, promoting sustainability, or taking a stand on equality and racial justice. However, brands must be genuine about it and commit to it wholeheartedly, because Gen Z will quickly dismiss whatever comes across as fake, irrelevant or misleading.

They care about their privacy. Gen Z does not appreciate ads that rely on personal data. They are cautious when it comes to divulging information on health and wellness, location, personal life, or payments because they have grown up in an era of widespread cybercrime.

They care about mental health. Many Gen Zers can remember growing up during the recession of 2009 and the impact it had on their parents. Fast forward several years, and major milestones in their lives were greatly impacted by the pandemic. With an increasing number of people needing support systems, products and services that support mental health awareness are appealing to this group.

They have a shorter attention span. According to Entrepreneur and UX Collective, Gen Z has an average attention span of about 8 seconds, compared to Millennials, who were themselves considered distracted with an average attention span of 12 seconds. Leading social media platforms have developed formats for this audience, from Vine’s 6-second videos and Snapchat’s 10-second story limit to YouTube’s 6-second pre-roll ads.

This target audience is unique, with different pain points and purchasing behaviors. If you’d like to learn more about research among Gen Z or any other target audience, let’s have a chat.



ACCELERANT RESEARCH | 1242 MANN DRIVE, SUITE 100 | MATTHEWS, NC 28105

© COPYRIGHT 2024. ALL RIGHTS RESERVED.